In the previous post, I covered a simple but much used method for simulating random variables or, rather, generating random variates. To simulate a random variable, the method requires writing down, in a tractable manner, the inverse of its cumulative distribution function.

But in the case of the normal (or Gaussian) distribution, there is no closed-form expression for its cumulative distribution function nor its inverse. This means you cannot, in an elegant and fast way at least, generate with the inverse method a single normal random variable using a single uniform random variable.

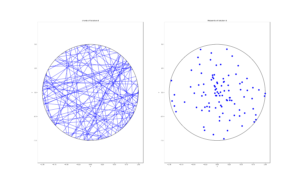

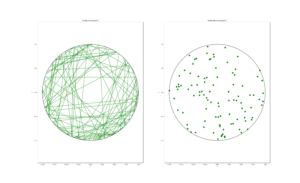

Interestingly, however, you can generate two (independent) normal variables with two (independent) uniform variables using the Box-Muller method, originally proposed by George Box and Mervin E. Muller. This approach uses the inverse method, but in practice it’s not used much (see below). I detail this method because I find it neat and it highlights the connection between the normal distribution and rotational symmetry, which has been the subject of some recent 3Blue1Brown videos on YouTube.

(This method was also used to simulate the Thomas point process, which I covered in a previous post.)

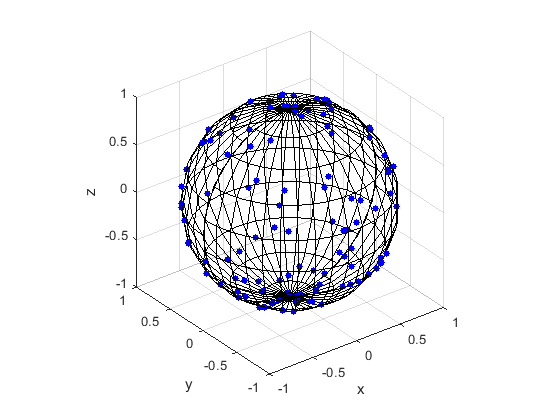

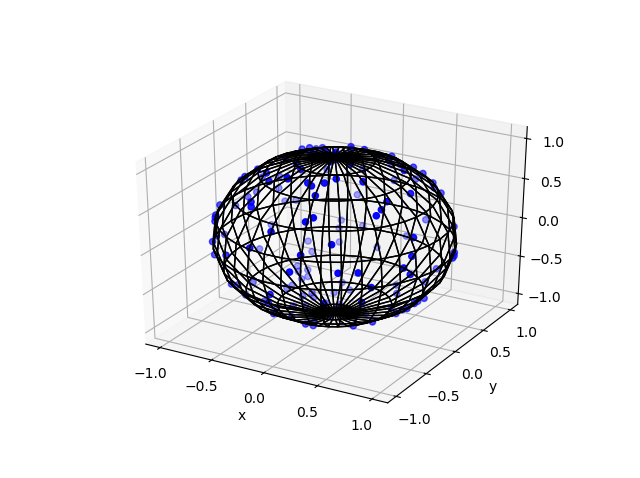

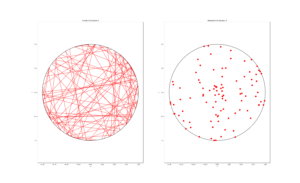

Incidentally, this connection is also mentioned in a previous post on simulating a Poisson point process on the surface of a sphere. In that method post, Method 2 uses an observation by the Muller that normal random variables can be used to position points uniformly on spheres.

I imagine this method was first observed by transforming two normal variables, instead of guessing various distribution pairs that would work. Then I’ll sketch the proof in the opposite direction, though it works in both directions.

Proof outline

The joint probability density of two independent variables is simply the product of the two individual probabilities densities. Then the joint density of two standard normal variables is

$$\begin{align}f_{X,Y}(x,y)&=\left[\frac{1}{\sqrt{2\pi}}e^{-x^2/2}\right]\left[\frac{1}{\sqrt{2\pi}}e^{-y^2/2}\right]\\&=\frac{1}{{2\pi}}e^{-(x^2+y^2)/2}\,.\end{align}$$

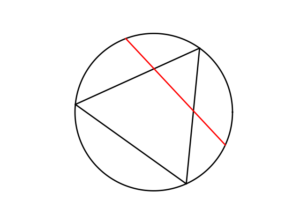

Now it requires a change of coordinates in two dimensions (from Cartesian to polar) using a Jacobian determinant, which in this case is \(|J(\theta,r)=r|\).1Alternatively, you can simply recall the so-called area element \(dA=r\,dr\,d\theta\). giving a new joint probability density

$$f_{\Theta,R}(\theta,r)=\left[\frac{1}{\sqrt{2\pi}}\right]\left[ r\,e^{-r^2/2}\right]\,.$$

Now we just identify the two probability densities. The first probability density corresponds to a uniform variable on \([0, 2\pi]\), whereas the second is that of a Rayleigh variable with parameter \(\sigma=1\). Of course the proof works in the opposite direction because the transformation (between Cartesian and polar coordinates) is a one-to-one function.

Algorithm

Here’s the Box-Muller method for simulating two (independent) standard normal variables with two (independent) uniform random variables.

Two (independent) standard normal random variable \(Z_1\) and \(Z_2\)

- Generate two (independent) uniform random variables \(U_1\sim U(0,1)\) and \(U_2\sim U(0,1)\).

- Return \(Z_1=\sqrt{-2\ln U_1}\cos(2\pi U_2)\) and \(Z_2=\sqrt{-2\ln U_1}\sin(2\pi U_2)\).

The method effectively samples a uniform angular variable \(\Theta=2\pi U_2\) on the interval \([0,2\pi]\) and a radial variable \(R=\sqrt{-2\ln U_1}\) with a Rayleigh distribution.

The algorithm produces two independent standard normal variables. Of course, as many of us learn in high school, if \(Z\) is a standard normal variable, then the random variable \(X=\sigma Z +\mu\) is a normal variable with mean \(\mu\) and standard deviation \(\sigma>0\) .

The fall of the Box-Muller method

Sadly this method was typically not used, as historically computer processors were slow at doing calculations involving the necessary mathematical functions. To avoid these functions researchers developed and employed other methods such as the ziggurat algorithm.

Also, although processors can now do such calculations much faster, many languages, not just scientific ones, come with functions for generating normal variables. Consequently, there had not been much need in implementing this method.

Update: The return of the Box-Muller method

The above conventional wisdom has changed in recent years as processors can now (on a hardware level) readily evaluate such functions. (I had been waiting to see if certain libraries would be re-written by using the Box-Muller method, but why bother if the old ones work so well?) When I used the term “processors”, I had central processor units (CPUs) in mind, but in recent years graphically processor units (GPUs) have become widely popular.

In a comment on this post, it pointed out that the Box-Muller method is the preferred choice for GPUs, as evidenced by its implementation in Nvidia’s CUDA library. The reason is GPUs do not handle well loops and branches in algorithms, so you should use methods that avoid these algorithmic steps. And the Box-Muller method is one that does just that.

The NVDIA website says:

Because GPUs are so sensitive to looping and branching, it turns out that the best choice for the Gaussian transform is actually the venerable Box-Muller transform

Further reading

Websites

Many websites detail this method. Here’s a couple:

- https://mathworld.wolfram.com/Box-MullerTransformation.html

- https://en.wikipedia.org/wiki/Box%E2%80%93Muller_transform

- https://quantgirl.blog/box-muller-and-marsaglia-bray/

For details on implementing this method on Nvidia GPUs, look at this page on the Nvidia website for using the CUDA library:

Papers

The original paper (which is freely available here) is:

- 1958 – Box and Muller, A Note on the Generation of Random Normal Deviates.

Another paper by Muller connects normal variables and the (surface of a) sphere:

- 1959 – Muller, A note on a method for generating points uniformly on n-dimensional spheres.

Books

Many books on stochastic simulation cover the Box-Muller method. The classic book by Devroye with the descriptive title Non-Uniform Random Variate Generation covers this method; see Section 4.1. There’s also the Handbook of Monte Carlo Methods by Kroese, Taimre and Botev; see Section 3.1.2.7. Ripley also covers the method (and he makes a remark with some snark that many people incorrectly spell it the Box-Müller method); see Section 3.1. The book Stochastic Simulation: Algorithms and Analysis by Asmussen and Glynn also mention the method and a variation by Marsaglia; see Examples 2.11 and 2.12.