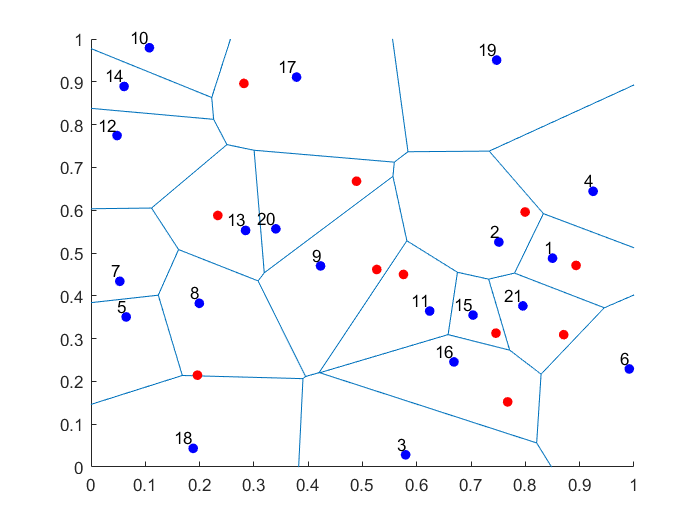

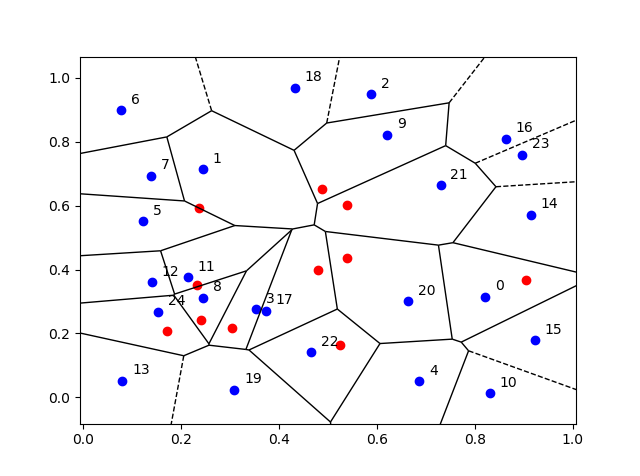

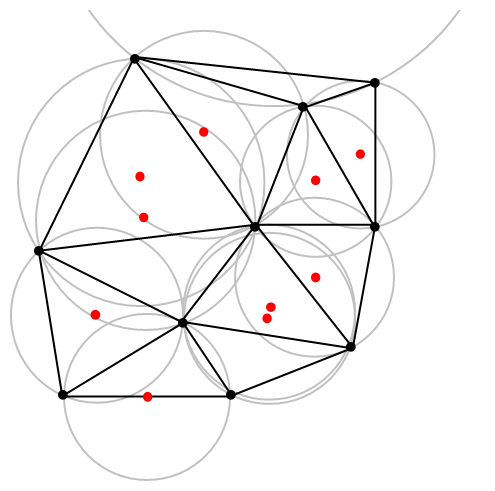

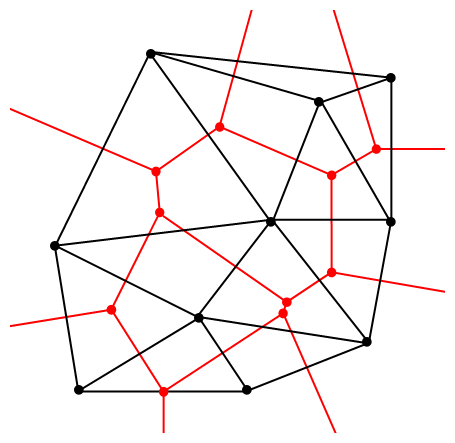

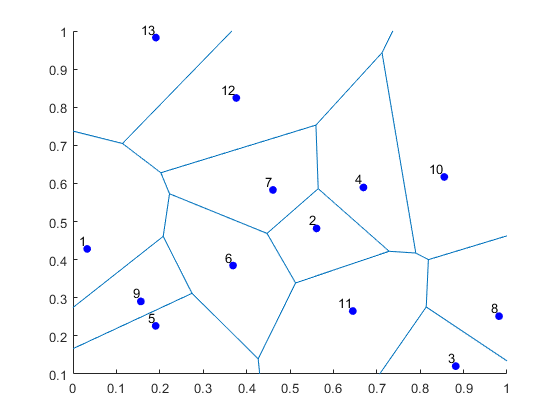

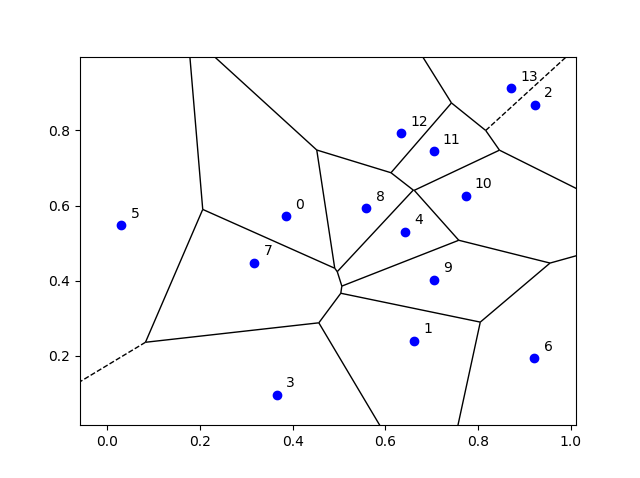

A non-random collection of points located on some space is called a point pattern in spatial statistics. Informally, you can interpret a point process as a collection of random points scattered over some underlying mathematical space, meaning each outcome or realization of a point process forms a point pattern. Using such intuition gets you pretty far. But if we want to be mathematically formal, we need to use precise mathematical objects.
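To make the informal picture concrete, here is a minimal Python sketch. It assumes, purely for illustration, that a fixed number of points are scattered uniformly over the unit square (a so-called binomial point process), which is only one of many possible point processes. The function name `sample_point_pattern` and its parameters are made up for this example. Each call with a given seed produces one realization, that is, one point pattern.

```python
import random

def sample_point_pattern(seed, n_points=10):
    """Return one realization: n_points uniform points on the unit square.

    Assumption for illustration only: a fixed number of independent,
    uniformly distributed points (a binomial point process).
    """
    rng = random.Random(seed)  # the seed fixes which realization we get
    return [(rng.random(), rng.random()) for _ in range(n_points)]

# Two different seeds give two different point patterns.
pattern_a = sample_point_pattern(seed=1)
pattern_b = sample_point_pattern(seed=2)

print(len(pattern_a), len(pattern_b))
```

The point process is the random mechanism (the function), while each returned list is a non-random point pattern.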

Historically, there have been a couple of main interpretations of a point process, which is also called a random point field. But now a standard mathematical approach is used.

In this post I will cover the main definitions, terminology and some of the notation used in the theory and application of point processes. (See the next post for more on point process notation.) I won’t delve too much into the precise details, giving just an outline with references at the end.

Underlying mathematical space

We consider a point process defined on some underlying mathematical space \(\mathbb{S}\), which is sometimes called the carrier space or state space. We further assume that the space is measurable, meaning it is equipped with a Borel \(\sigma\)-algebra \(\mathcal{S}\).

In practice, the underlying space is usually the real line \(\mathbb{R}\), the plane \(\mathbb{R}^2\), or some other familiar mathematical space like a square lattice. More generally, a point process can be defined on any metric space, allowing for the notion of distance. Mathematicians study point processes in even more general settings by defining them on, for example, a locally compact second countable Hausdorff space. But such generality is typically not needed for most people and their applications.

Modern probability approach

In modern probability theory, if we want to define a random mathematical object, we start with a random experiment in the context of a probability space or triple \((\Omega,\mathcal{A},\mathbb{P})\), where:

- \(\Omega\) is a sample space, which is the set of all possible outcomes;

- \(\mathcal{A}\) is a \(\sigma\)-algebra or \(\sigma\)-field, which is a family of events (subsets of \(\Omega\));

- \(\mathbb{P}\) is a probability measure, which assigns probability to each event in \(\mathcal{A}\).

To gain some intuition, David Williams says to imagine that Tyche, Goddess of Chance, chooses a sample point \(\omega\in\Omega\) at random according to the law \(\mathbb{P}\) such that an event \(A\in \mathcal{A}\) has a probability given by \(\mathbb{P}(A)\), where we understand probability with our own intuition. To bring things back to Earth, we can also choose a sample point \(\omega\in\Omega\) by using some physical experiment, as long as it is truly random, such that the probability of \(A\in \mathcal{A}\) happening is given by \(\mathbb{P}(A)\).

Now we can define random objects by using a certain measurable function or mapping that maps to a suitable space of objects. For example, a real-valued random variable is a measurable function from \(\Omega\) to the real line; a random matrix is a measurable function from \(\Omega\) to some space of matrices; and, as John Kingman quips in his classic book Poisson Processes, a random elephant is just a measurable function from \(\Omega\) to some suitable space of elephants.
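As a toy illustration of this idea, the following Python sketch builds a finite probability space and a random variable on it. The choice of a fair six-sided die, and the names `omega_space`, `prob` and `X`, are my own assumptions for the example. On a finite sample space every subset is an event, so measurability is automatic here.

```python
from fractions import Fraction

# Hypothetical finite sample space: the outcomes of one fair die roll.
omega_space = [1, 2, 3, 4, 5, 6]

def prob(event):
    """Probability measure P: uniform weight on each outcome in the event."""
    return Fraction(len(set(event) & set(omega_space)), len(omega_space))

def X(omega):
    """A random variable: a function from the sample space to the reals."""
    return omega % 2  # 1 if the roll is odd, 0 otherwise

# The event {X = 1} is the set of outcomes that X maps to 1.
event_odd = [omega for omega in omega_space if X(omega) == 1]
p_odd = prob(event_odd)

print(event_odd, p_odd)
```

The randomness lives entirely in the choice of \(\omega\); the function `X` itself is deterministic.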

But what space should we use for a point process? To answer that, we need to interpret a point process as a suitable mathematical object.

Interpretation

There are different ways to interpret a point process, which is often denoted by a single letter, for example, \(N\) or \(\Phi\). (The convention of using the Greek letter \(\Phi\) comes from German mathematicians, but some prefer not to use \(\Phi\), as it’s often used for the normal cumulative distribution function.) If the point process is defined on a space like the real line, where the points can be ordered, then additional interpretations exist, but mathematicians usually assume the order of the points does not matter, limiting the possible interpretations.

Random closed set

In mathematics a collection of distinct things is formalized by a mathematical object called a set. We say that a set contains elements or members, and a set never contains more than one of the same element. Sets are fundamental objects with set concepts and notation being found everywhere in mathematics.

We now define a common type of point process, which we can formalize with the concept of a set.

A point process is simple if the probability of all points of the point process being distinct is one.

In other words, for a simple point process, there is zero probability of two or more of its points being found in the same location of the underlying state space \(\mathbb{S}\), which brings us to our first interpretation.

A simple point process can be interpreted as a random closed set.

More specifically, we can interpret a simple point process as a (measurable) mapping from a sample space \(\Omega\) to the space of closed sets \(\mathbb{F}\), meaning that each realization of a simple point process is a closed set \(\phi\in \mathbb{F}\).

Point process theory has adopted the notation from set theory. For example, if we want to say some point, which we denote by \(x\), of the underlying space \(\mathbb{S}\) belongs to or is a member of a simple point process \(\Phi\), then we can simply write \(x\in \Phi\). We can also write a point process as \(\{x_i\}_i\) to highlight its interpretation as a random closed set of points.

The theory of random sets, which is a field of study in its own right, can be applied to simple point processes owing to this interpretation. But for non-simple point processes, we need another point process interpretation.
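A small Python sketch shows why the set interpretation breaks down for non-simple point patterns. The coordinates below are made up, and the repeated point is deliberate. Converting the pattern to a set silently drops the repeated point, whereas a count per location keeps it.

```python
from collections import Counter

# A hypothetical realization with two points at the same location.
pattern = [(0.2, 0.7), (0.5, 0.5), (0.5, 0.5), (0.9, 0.1)]

# Set interpretation: a set never holds the same element twice,
# so the repeated point collapses into one.
as_set = set(pattern)

# Counting interpretation: keep the multiplicity of each location.
as_counts = Counter(pattern)

# The pattern is simple only if no location is repeated.
is_simple = len(as_set) == len(pattern)

print(len(pattern), len(as_set), as_counts[(0.5, 0.5)], is_simple)
```

Keeping a count for each location is a first step towards treating the pattern as a counting measure rather than a set.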

Random measures

Modern integration theory is based on measure theory, which revolves around the concept of a set function known as a measure. In addition to a couple of other properties, when you apply this function to a set, it gives a number, such as an integer or real number. For example, a counting measure gives you the number of elements in a set, which could be a subset \(B\), such as a region of the plane \(B \subset \mathbb{R}^2\). (The letter \(B\) is often used for sets in measure and probability theory as it’s typically assumed that the sets are Borel sets, which form a very large family of well-behaved sets in terms of measurability.) The concept of a counting measure gives us an interpretation of a point process, which has now become the standard one.

A point process can be interpreted as a random counting measure.

More specifically, we define a point process as a (measurable) mapping from a sample space \(\Omega\) to the space of counting measures \(\mathbb{M}\), meaning that each realization of a point process is a counting measure \(\phi\in \mathbb{M}\). Some mathematicians even say a point process is just another name for a random counting measure. The techniques of random measure theory provide an alternative (and arguably the main) approach to studying point processes.

This standard interpretation of a point process means that point process theory borrows notation heavily from measure theory and calculus. For example, in measure theory we can write a (non-random) counting measure as \(\#\), so \(\#(B)=n\) is how we write that the set \(B\) contains \(n\) points. We can then write the number of points of a point process \(\Phi\) located in some (Borel) set \(B\) as \(N(B) =\#( B \cap \Phi)\), where \(N(B)\) is a random variable. In this expression, the point process is denoted by \(\Phi\), while \(N(B)\) is the number of points of \(\Phi\) in \(B\), meaning \(N\) is a random counting measure.
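The counting expression \(N(B) =\#( B \cap \Phi)\) can be sketched in a few lines of Python for a single realization. The point coordinates, the helper names `in_rectangle` and `count_points`, and the restriction to half-open rectangles \([x_{\min}, x_{\max}) \times [y_{\min}, y_{\max})\) as the sets \(B\) are all assumptions made for this example.

```python
# One hypothetical realization (a simple point pattern) in the unit square.
phi = [(0.1, 0.2), (0.5, 0.5), (0.8, 0.3), (0.9, 0.9)]

def in_rectangle(point, x_min, x_max, y_min, y_max):
    """True if the point lies in the half-open rectangle B."""
    x, y = point
    return x_min <= x < x_max and y_min <= y < y_max

def count_points(pattern, x_min, x_max, y_min, y_max):
    """N(B): the number of points of the pattern that fall in B."""
    return sum(1 for p in pattern if in_rectangle(p, x_min, x_max, y_min, y_max))

# Counts on two disjoint halves of the unit square and on their union.
n_left = count_points(phi, 0.0, 0.5, 0.0, 1.0)
n_right = count_points(phi, 0.5, 1.0, 0.0, 1.0)
n_all = count_points(phi, 0.0, 1.0, 0.0, 1.0)

print(n_left, n_right, n_all)
```

Because the two halves are disjoint, their counts add up to the count on the whole square, which is the additivity property expected of a measure.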

Important concepts

In the theory of point processes, like any other field of mathematics, there are various important concepts for understanding and proving results. Without going into the details, these include shot noise, Campbell’s theorem, Laplace functional, Palm calculus, void probability, and factorial moment measures. In future posts, I’ll detail some of these concepts.

Further reading

There are many, many books covering the fundamentals of modern probability theory, including those (in roughly increasing order of difficulty) by Grimmett and Stirzaker, Karr, Rosenthal, Shiryaev, Durrett, and Billingsley. A very quick introduction is given in this web article.

For point process theory, Wikipedia is a good place to start, particularly the articles on point processes and point process notation, though the former is too mathematical for a Wikipedia article. The standard reference on point processes is An Introduction to the Theory of Point Processes by Daley and Vere-Jones, which is now spread across two volumes, but I would not learn the subject from these books.

The classic text Stochastic Geometry and its Applications by Chiu, Stoyan, Kendall and Mecke covers point processes and the varying notation in Chapters 2 and 4. Similar material is covered in the previous edition by Stoyan, Kendall and Mecke. A more mathematical book that covers point processes and random sets is Stochastic and Integral Geometry by Schneider and Weil. Point processes are also covered in a recent readable tutorial on Palm calculus and Gibbs point processes, which will be the subject of another post.

Spatial statistics builds on point process theory, so its texts are good for learning the basics of point processes. I suggest the lecture notes by Baddeley, which form Chapter 1 of these published lectures, edited by Baddeley, Bárány, Schneider, and Weil. I always recommend the book Spatial Point Patterns: Methodology and Applications with R written by spatial statistics experts Baddeley, Rubak and Turner, which covers the spatial statistics (and point process simulation) R package spatstat. Another good book is Statistical Inference and Simulation for Spatial Point Processes by Møller and Waagepetersen, but there are many more.

In recent years, point process theory (under the guise of stochastic geometry) has been used to model wireless networks. An early treatment of the subject is the two-volume textbook Stochastic Geometry and Wireless Networks by Baccelli and Błaszczyszyn, where the first volume is on theory and the second volume is on applications. Haenggi wrote a very readable introductory book called Stochastic Geometry for Wireless Networks.

For the more mathematically brave, there’s the recent book Random Measures, Theory and Applications by Kallenberg, who is the authority on the subject. His earlier books have now become obsolete with this recent publication. Another mathematically challenging book is The Theory of Random Sets by Molchanov, but it has less emphasis on point processes.

A very recent book (manuscript) is Random Measures, Point Processes, and Stochastic Geometry by Baccelli, Błaszczyszyn, and Karray, which contains much material, including new results and proofs. Finally, Last and Penrose wrote a mathematical monograph, Lectures on the Poisson Process, which is freely available online here.